Nvidia Unveils Blackwell: Touted as the 'Ultimate AI Powerhouse' Chip

Nvidia unveiled the Blackwell platform at its GTC event, promising a major leap in computational power. At the core of the platform is a new GPU that Nvidia bills as the “world’s most powerful chip.” The GPU is part of the GB200 NVL72 rack-scale system and is complemented by a suite of enterprise AI tools. Leading cloud service companies have already committed to Blackwell, with the aim of advancing generative AI, deep learning, and cloud computing.

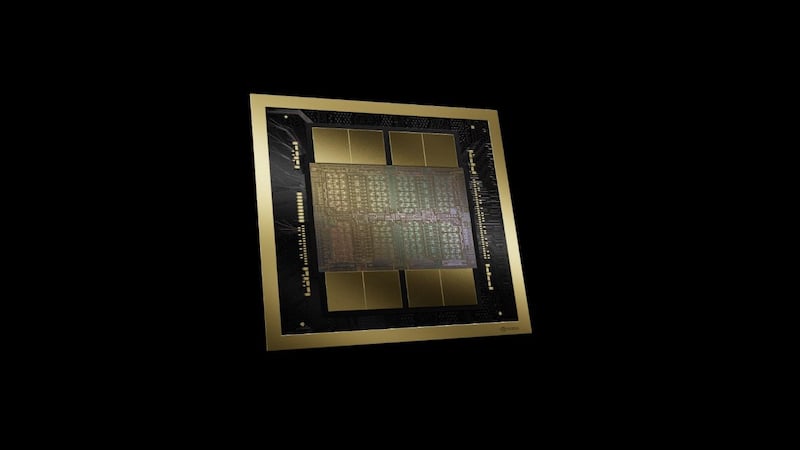

Blackwell GPU with two dies and on-package HBM3e.

In 2012, AlexNet, a neural network with 60 million parameters, transformed the AI landscape and ignited the surge in GPU-accelerated AI development. Today, model complexity has soared past 10 trillion parameters, an increase of more than five orders of magnitude that has driven a relentless rise in computational demand. Blackwell, announced by Nvidia in 2024, is designed to meet that demand with a multi-die GPU, companion CPUs, and terabyte-per-second connectivity.

Nvidia Launches Monumental Blackwell GPU with 208 Billion Transistors

Engineered for the vast computing requirements of generative AI in data centers, Blackwell is, by Nvidia's account, the largest GPU ever built. Nvidia claims up to a 25-fold improvement in energy efficiency over its previous generation of GPUs. The architecture is named for David Harold Blackwell, a pioneering figure in statistics and game theory and the first African American inducted into the National Academy of Sciences. It succeeds Hopper in Nvidia's line of AI architectures.

The Blackwell GPU comprises two dies linked by a 10 TB/s interconnect so that they operate as a single GPU. This sidesteps the reticle size limit, the maximum die area that lithography optics can pattern in one exposure, which has long capped the size of high-performance chips. The GPU packs 208 billion transistors and is fabricated on a custom TSMC 4NP process node.

The Six Pioneering Features of Blackwell Architecture

As detailed in the linked technical brief, the Blackwell architecture introduces six key innovations.

AI Superchip Category

Nvidia positions Blackwell as a new class of AI superchip that surpasses any prior offering from Nvidia or its rivals. That claim rests on the five other GPU advances featured in Blackwell.

Next-Gen Transformer Engine

At the heart of Blackwell GPUs is Nvidia's second-generation Transformer Engine, built on custom tensor core technology. Combined with TensorRT-LLM and the NeMo Framework, the engine accelerates training and inference for large language models (LLMs) and mixture-of-experts (MoE) models. The new tensor cores add lower-precision number formats and community-defined micro-scaling formats, including four-bit floating point (FP4). According to Nvidia, FP4 doubles both the performance and the size of the models that a given amount of memory can support.
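The link between four-bit precision and model size comes down to bytes per parameter. The following is a minimal sketch of that arithmetic, not a description of how the Transformer Engine stores data. The function name `weight_footprint_gb` and the one-trillion-parameter model are hypothetical, and the estimate counts weights only, ignoring activations, scaling factors, and other overhead.

```python
def weight_footprint_gb(num_parameters: float, bits_per_parameter: int) -> float:
    """Return the memory needed for model weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_parameter / 8 / 1e9


# Hypothetical model size, chosen only to keep the numbers easy to read.
PARAMETERS = 1e12  # one trillion parameters

for name, bits in (("FP16", 16), ("FP8", 8), ("FP4", 4)):
    footprint = weight_footprint_gb(PARAMETERS, bits)
    print(f"{name}: {footprint:,.0f} GB of weights")
```

Halving the bits per parameter halves the footprint, so the same memory can hold a model with twice as many parameters.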

Secured AI Computing

Blackwell integrates Nvidia Confidential Computing, which uses hardware-based security to block unauthorized access. Nvidia describes Blackwell as the first GPU with TEE-I/O (trusted execution environment for input/output) capability. It works with fifth-generation NVLink and the NVLink Switch to form a hardware security layer.

NVLink and NVLink Switch Innovations

Exascale computing and trillion-parameter AI models depend on fast communication among GPUs. The NVLink interconnect is central to GPU clusters, scaling to as many as 576 GPUs and providing 1.8 TB/s of bidirectional bandwidth per GPU. The NVLink Switch chip delivers 130 TB/s of aggregate GPU bandwidth across a 72-GPU NVLink domain (NVL72).
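The 130 TB/s figure is consistent with simple multiplication, assuming the 1.8 TB/s quoted for NVLink is the bandwidth available to each GPU. The sketch below only checks that arithmetic. The constant and function names are illustrative and do not come from any Nvidia API.

```python
GPUS_PER_DOMAIN = 72   # size of the NVL72 NVLink domain
PER_GPU_TBPS = 1.8     # assumption: NVLink bandwidth per GPU, in TB/s


def aggregate_bandwidth_tbps(gpu_count: int, per_gpu_tbps: float) -> float:
    """Sum the per-GPU NVLink bandwidth over every GPU in the domain."""
    return gpu_count * per_gpu_tbps


aggregate = aggregate_bandwidth_tbps(GPUS_PER_DOMAIN, PER_GPU_TBPS)
print(f"Aggregate NVLink bandwidth: {aggregate:.1f} TB/s")
```

The product is 129.6 TB/s, which rounds to the 130 TB/s quoted for the 72-GPU domain.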

Revamped Decompression Engine

Blackwell GPUs work in tandem with Nvidia Grace CPUs to boost data throughput. With access to the Grace CPU's memory over a link offering 900 GB/s of bidirectional bandwidth, the new decompression engine accelerates database queries and supports compression formats including LZ4, Deflate, and Snappy.

Enhanced RAS (Reliability, Availability, and Serviceability) Engine

While Blackwell focuses on processing and communication for AI, its RAS engine adds fault tolerance and predictive management to keep performance dependable. The engine continuously monitors a wide range of hardware and software data points to pinpoint potential issues.

Introducing the Blackwell GB200 Superchip and NVL72 Server

The GB200 Superchip combines two Blackwell GPUs with a single Nvidia Grace CPU. It carries up to 384 GB of HBM3e high-bandwidth memory and reaches up to 16 TB/s of memory bandwidth. Multiple GB200 systems can be networked using the new Quantum-X800 InfiniBand and Spectrum-X800 Ethernet platforms at speeds of up to 800 Gb/s.

GB200 Superchip with two Blackwell GPUs and one Grace CPU.

Nvidia also unveiled the GB200 NVL72, a rack-scale server design. It combines 36 GB200 superchips, for a total of 72 GPUs and 36 Grace CPUs, in a liquid-cooled rack. The GB200 NVL72 operates as a single large GPU. Nvidia claims up to 30 times the LLM inference performance and up to 25 times lower total cost of ownership (TCO) compared with a system built on the same number of its predecessor, the Nvidia H100.

The GB200 NVL72 server with up to 36 GB200 superchips.
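The rack-level totals follow from the per-superchip figures quoted above. The sketch below simply multiplies them out. The names are illustrative, and because the memory and bandwidth figures are "up to" values, the results should be read as upper bounds rather than a guaranteed configuration.

```python
# Per-superchip figures as quoted for the GB200.
GPUS_PER_SUPERCHIP = 2
CPUS_PER_SUPERCHIP = 1
HBM3E_GB_PER_SUPERCHIP = 384            # "up to" figure
MEMORY_BANDWIDTH_TBPS_PER_SUPERCHIP = 16  # "up to" figure


def rack_totals(superchips: int = 36) -> dict:
    """Scale the per-superchip figures to a rack with the given superchip count."""
    return {
        "gpus": superchips * GPUS_PER_SUPERCHIP,
        "cpus": superchips * CPUS_PER_SUPERCHIP,
        "hbm3e_gb": superchips * HBM3E_GB_PER_SUPERCHIP,
        "memory_bandwidth_tbps": superchips * MEMORY_BANDWIDTH_TBPS_PER_SUPERCHIP,
    }


totals = rack_totals()
for key, value in totals.items():
    print(f"{key}: {value:,}")
```

With 36 superchips this gives 72 GPUs, 36 CPUs, up to 13,824 GB of HBM3e, and up to 576 TB/s of combined memory bandwidth.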

No Shortage of Partners

Given Nvidia's long-standing lead in GPUs for parallel processing, it is hardly surprising that many data center and AI companies are aligning with the Blackwell platform. Google/Alphabet plans to use Blackwell in its cloud offerings and for projects such as those at DeepMind. Amazon, a close Nvidia partner, aims to bring Blackwell to Amazon Web Services (AWS), and the two companies will continue to collaborate on Project Ceiba, which focuses on advanced networking and AI research. Dell, Facebook/Meta, Microsoft, OpenAI, Oracle, and Tesla have also announced plans to use Blackwell in their AI initiatives.

All images used courtesy of Nvidia.